When I first tried to understand Mira Network, I realized I was approaching it the wrong way. I kept thinking of it as another blockchain project competing for attention. But the more I read, the more it felt like something different. It wasn’t trying to be louder or faster. It was trying to solve a quiet but serious problem that most people only notice when something goes wrong.

AI is impressive, but it makes mistakes with confidence: it can sound certain while being completely wrong. In everyday use, that’s an inconvenience; in finance or compliance, the same kind of error can cause real damage. The idea behind Mira started to make sense when I stopped asking how innovative it was and started asking why it needed to exist.

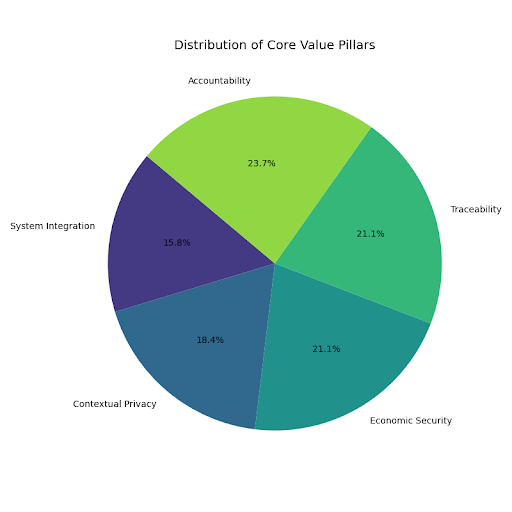

What I slowly understood is that Mira doesn’t try to make AI smarter. Instead, it tries to make AI accountable. It takes what an AI produces and breaks it into smaller claims, which are then checked across a distributed network of independent models and validators. Rather than relying on a single system, the network reaches a result through many of them, backed by economic incentives.
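To make that flow concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: claims are split naively on sentence boundaries, each "verifier" is just a function returning `True` or `False`, and a claim is accepted when at least two-thirds of verifiers approve it. The names `split_into_claims`, `verify_output`, and `make_fact_checker`, and the two-thirds threshold, are hypothetical and do not describe Mira’s actual protocol or API.

```python
from fractions import Fraction
from typing import Callable, Dict, List, Set

# Hypothetical verifier: takes one claim, returns True if it judges the claim correct.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naively treat each sentence of the output as one claim (an assumption)."""
    return [part.strip() for part in output.split(".") if part.strip()]


def verify_output(
    output: str,
    verifiers: List[Verifier],
    threshold: Fraction = Fraction(2, 3),  # hypothetical supermajority
) -> Dict[str, bool]:
    """Accept each claim only if the share of approving verifiers meets the threshold."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results: Dict[str, bool] = {}
    for claim in split_into_claims(output):
        approvals = sum(1 for verify in verifiers if verify(claim))
        results[claim] = Fraction(approvals, len(verifiers)) >= threshold
    return results


def make_fact_checker(facts: Set[str]) -> Verifier:
    """Toy stand-in for an independent model: approves only claims it already knows."""
    return lambda claim: claim in facts


def overconfident_model(claim: str) -> bool:
    return True  # approves everything, like a model that sounds certain while wrong


model_a = make_fact_checker({"Paris is the capital of France"})
model_b = make_fact_checker({"Paris is the capital of France"})

verdicts = verify_output(
    "Paris is the capital of France. The Moon is made of cheese.",
    [model_a, model_b, overconfident_model],
)
print(verdicts)
# {'Paris is the capital of France': True, 'The Moon is made of cheese': False}
```

The overconfident model is outvoted on the false claim: no single system decides the outcome on its own.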

At first, I thought verification meant proving something was absolutely true. Over time, I realized that’s not realistic. In real institutions, what matters more is traceability: if something is questioned later, there needs to be a record of how it was evaluated. Mira seems to focus on exactly that, creating structured evidence that a process happened responsibly.
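One common way to make such a record tamper-evident is a hash chain, where each entry stores a fingerprint of the entry before it. The sketch below is an illustration of that general technique only; the text does not say Mira stores its records this way, and `AuditLog`, `record`, `head`, and the entry fields are hypothetical.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(entry: Dict[str, Any]) -> str:
    """Hash an entry deterministically by serializing it with sorted keys."""
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditLog:
    """Hypothetical append-only log in which each entry stores the previous entry's hash."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    def record(self, claim: str, verdict: bool, verifier_ids: List[str]) -> Dict[str, Any]:
        """Append one evaluation, linked to the entry before it."""
        prev = entry_hash(self.entries[-1]) if self.entries else GENESIS_HASH
        entry = {
            "claim": claim,
            "verdict": verdict,
            "verifiers": list(verifier_ids),
            "prev_hash": prev,
        }
        self.entries.append(entry)
        return entry

    def head(self) -> str:
        """Hash of the newest entry; publishing it elsewhere also protects that entry."""
        return entry_hash(self.entries[-1]) if self.entries else GENESIS_HASH

    def is_intact(self) -> bool:
        """Check every link; editing any entry but the newest breaks the next entry's prev_hash."""
        return all(
            curr["prev_hash"] == entry_hash(prev)
            for prev, curr in zip(self.entries, self.entries[1:])
        )


log = AuditLog()
log.record("Paris is the capital of France", True, ["model-a", "model-b", "model-c"])
log.record("The Moon is made of cheese", False, ["model-a", "model-b", "model-c"])
print(log.is_intact())  # True

log.entries[0]["verdict"] = False  # simulate someone quietly rewriting history
print(log.is_intact())  # False
```

The log does not prove any verdict was correct; it only makes it hard to change the story of how a claim was evaluated after the fact, which is the kind of traceability the paragraph describes.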

Privacy was another concept I had to rethink. I used to imagine privacy in absolute terms: either data is hidden or it’s public. But finance doesn’t work that way; different people see different layers of information. Mira’s approach feels more contextual than ideological. It’s about proving that validation occurred without exposing all of the underlying data.
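A salted hash commitment is one standard building block that illustrates this idea, used here purely as an example; nothing above says Mira works this way. A fingerprint of a sensitive record is published next to the verification result, while the record itself is shown only to whoever is entitled to check it. The names `commit` and `verify_opening` and the sample record are hypothetical.

```python
import hashlib
import hmac
import secrets
from typing import Tuple


def commit(data: bytes) -> Tuple[str, bytes]:
    """Return (public commitment, private salt) for a piece of sensitive data.

    The random salt stops anyone from confirming a guess about the data
    by hashing candidate values themselves.
    """
    salt = secrets.token_bytes(16)
    commitment = hashlib.sha256(salt + data).hexdigest()
    return commitment, salt


def verify_opening(commitment: str, data: bytes, salt: bytes) -> bool:
    """Let an authorized auditor, given the data and salt, check them against the commitment."""
    expected = hashlib.sha256(salt + data).hexdigest()
    return hmac.compare_digest(expected, commitment)


record = b"loan application 42: approved after verification"
public_commitment, private_salt = commit(record)

# Anyone can see public_commitment; only parties holding the record and salt can open it.
print(verify_opening(public_commitment, record, private_salt))  # True
print(verify_opening(public_commitment, b"loan application 42: rejected", private_salt))  # False
```

This captures the "different layers" point in miniature: the public sees that something specific was committed to, and an auditor with access can confirm exactly what it was.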

The updates I’ve noticed about the project aren’t flashy. They talk about improving node reliability, refining metadata standards, and strengthening observability tools. None of this trends on social media. But when I imagine a compliance team depending on the system, those details feel important. Stability matters more than excitement.

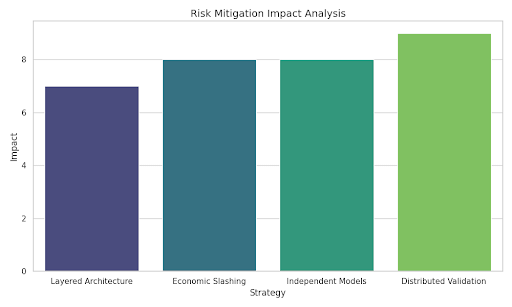

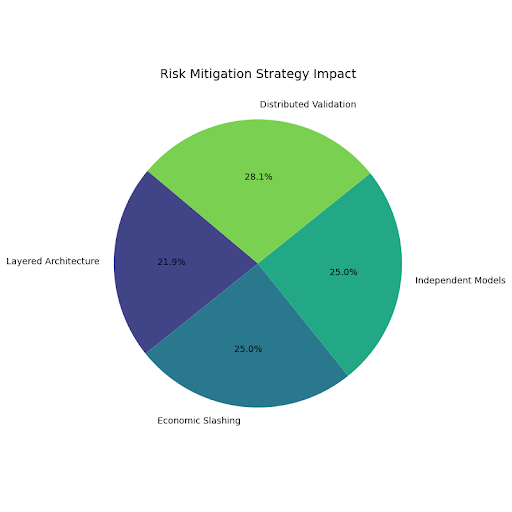

I also spent time trying to understand the token mechanics. Validators stake tokens to participate in verification, and if they act dishonestly or carelessly, they risk losing that stake. This gives participants a concrete financial reason to take verification seriously. The token is less about speculation and more about tying responsibility to economic weight.
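A toy model of stake and slashing helps show why this changes behavior. The minimum stake, the 50% penalty, and the rule "slash when your verdict contradicts consensus" are all hypothetical simplifications chosen for illustration, as are the names `Validator` and `settle`; they are not Mira’s actual parameters or rules.

```python
from dataclasses import dataclass

MIN_STAKE = 100       # hypothetical minimum stake needed to take part in verification
SLASH_PERCENT = 50    # hypothetical share of stake lost for a verdict that contradicts consensus


@dataclass
class Validator:
    name: str
    stake: int  # whole token units, to keep the arithmetic exact

    def can_participate(self) -> bool:
        return self.stake >= MIN_STAKE


def settle(validator: Validator, verdict: bool, consensus: bool) -> int:
    """Slash a validator whose verdict disagrees with consensus; return the amount slashed.

    Treating "disagrees with consensus" as a stand-in for dishonest or careless
    work is a simplifying assumption for this sketch.
    """
    if verdict == consensus:
        return 0
    penalty = validator.stake * SLASH_PERCENT // 100
    validator.stake -= penalty
    return penalty


node = Validator("node-1", stake=150)
print(node.can_participate())                         # True
print(settle(node, verdict=True, consensus=False))    # 75
print(node.stake, node.can_participate())             # 75 False
```

A single bad verdict here costs half the stake and, in this example, the right to keep verifying. Slashing purely on disagreement would also punish honest dissent, which is one reason dispute mechanisms matter.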

The validator structure itself is layered. Independent AI models evaluate claims, and validators observe and aggregate those results. That diversity reduces the risk of one model’s bias dominating the outcome. It feels closer to risk management than to hype-driven decentralization. Multiple perspectives reduce single points of failure.
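The claim that multiple perspectives reduce single points of failure can be made concrete with a little arithmetic. Assume, optimistically, that each model is wrong with the same probability and that models err independently; a majority vote is then wrong only when more than half of the models are wrong at once. Real models often share training data and blind spots, so their errors are correlated and the actual gain is smaller. The function name `majority_error` is hypothetical.

```python
from math import comb


def majority_error(n_models: int, p_error: float) -> float:
    """Probability that a strict majority of n models is wrong at the same time.

    Assumes each model errs independently with probability p_error, which is
    a best case: correlated errors would generally push this figure up.
    """
    return sum(
        comb(n_models, k) * p_error**k * (1 - p_error) ** (n_models - k)
        for k in range(n_models // 2 + 1, n_models + 1)
    )


for n in (1, 3, 5):
    print(n, round(majority_error(n, 0.10), 4))
# 1 0.1
# 3 0.028
# 5 0.0086
```

Under these assumptions, moving from one model to three cuts the error rate from 10% to about 2.8%.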

There are compromises too, and I’ve come to see them as necessary. Compatibility with existing systems, gradual migration phases, and practical deployment choices show that the project understands how institutions actually adopt new technology. Institutions don’t replace infrastructure overnight. They test, observe, and integrate slowly. Mira seems designed with that patience in mind.

Looking forward, I imagine the network becoming more integrated into AI pipelines used in finance. Not in a dramatic way, but quietly embedded in workflows that require auditability. Stronger dispute mechanisms, better metadata, and refined slashing rules feel like natural progress. None of it is glamorous, but all of it is useful.

What has changed for me is not excitement, but clarity. I don’t see Mira as a revolutionary platform anymore. I see it as a structured response to the growing pressure of using AI in environments where mistakes are costly. It’s trying to make AI outputs something institutions can stand behind.

The more I reflect on it, the more the design feels intentional. It accepts trade-offs, builds patiently, and focuses on accountability over attention. I’m not swept up by big promises. Instead, I’m slowly becoming comfortable with the logic behind it. And that quiet confidence feels more durable than hype ever could.