I once sat down with a thick “AI verified” report, opened the logs, and found that the model version running in production didn’t match the hash described in the document. I could only sigh, because I’d seen this story too many times. Since then, I’ve treated “AI verification” as a game of boundaries: where you draw the boundary is where you accept the risk.

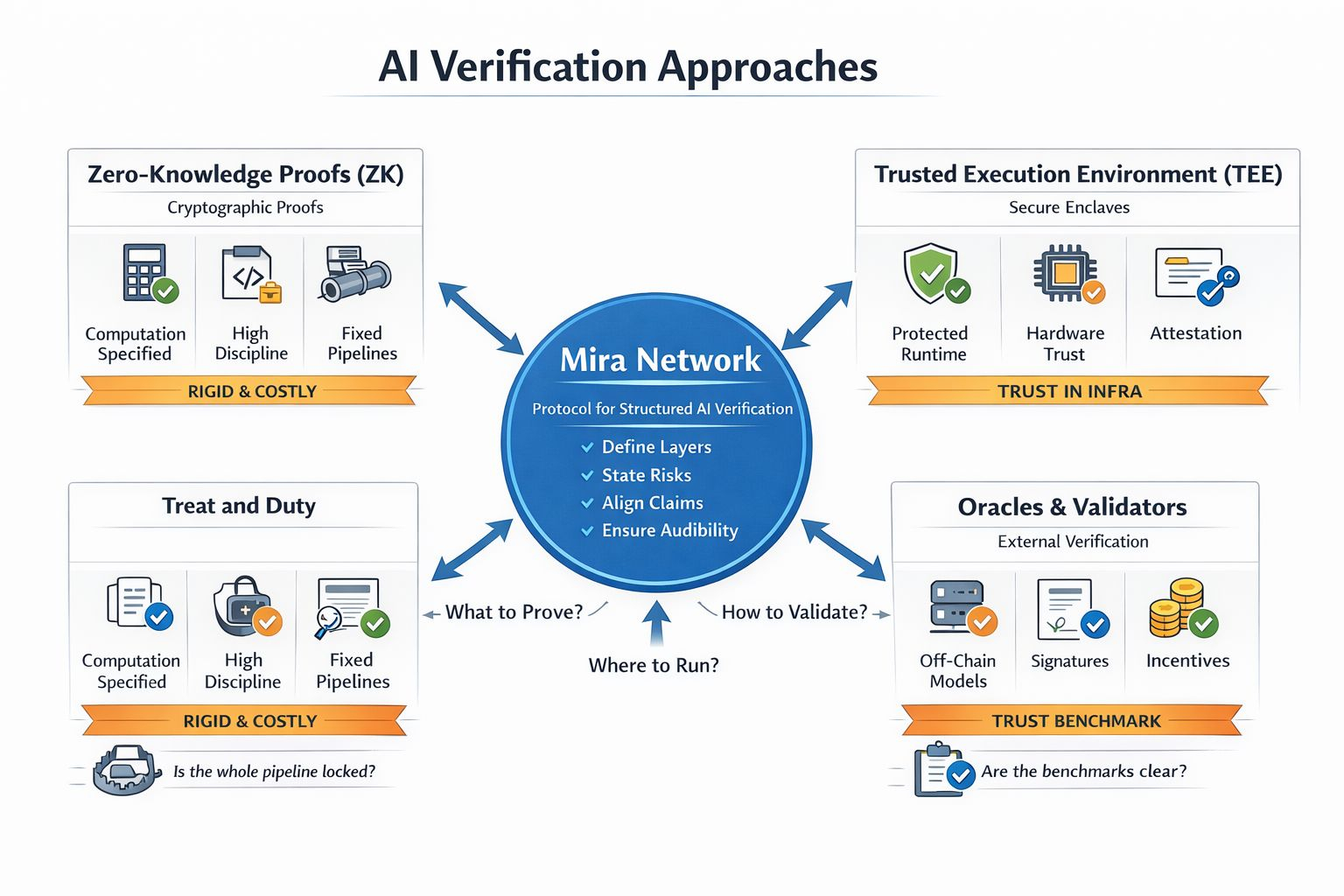

A lot of people in crypto hear “verification” and imagine a world where you don’t have to trust anyone, but AI makes me question that very phrase. AI isn’t a single computation. It’s a chain of tiny technical choices that add up to big consequences: whether the input data was poisoned, whether preprocessing was altered, whether the model version is correct, whether the runtime environment was tampered with, and, crucially, what “correct” even means under which standard. That framing is why Mira Network keeps coming up for me: it forces the conversation away from slogans and back to layers.

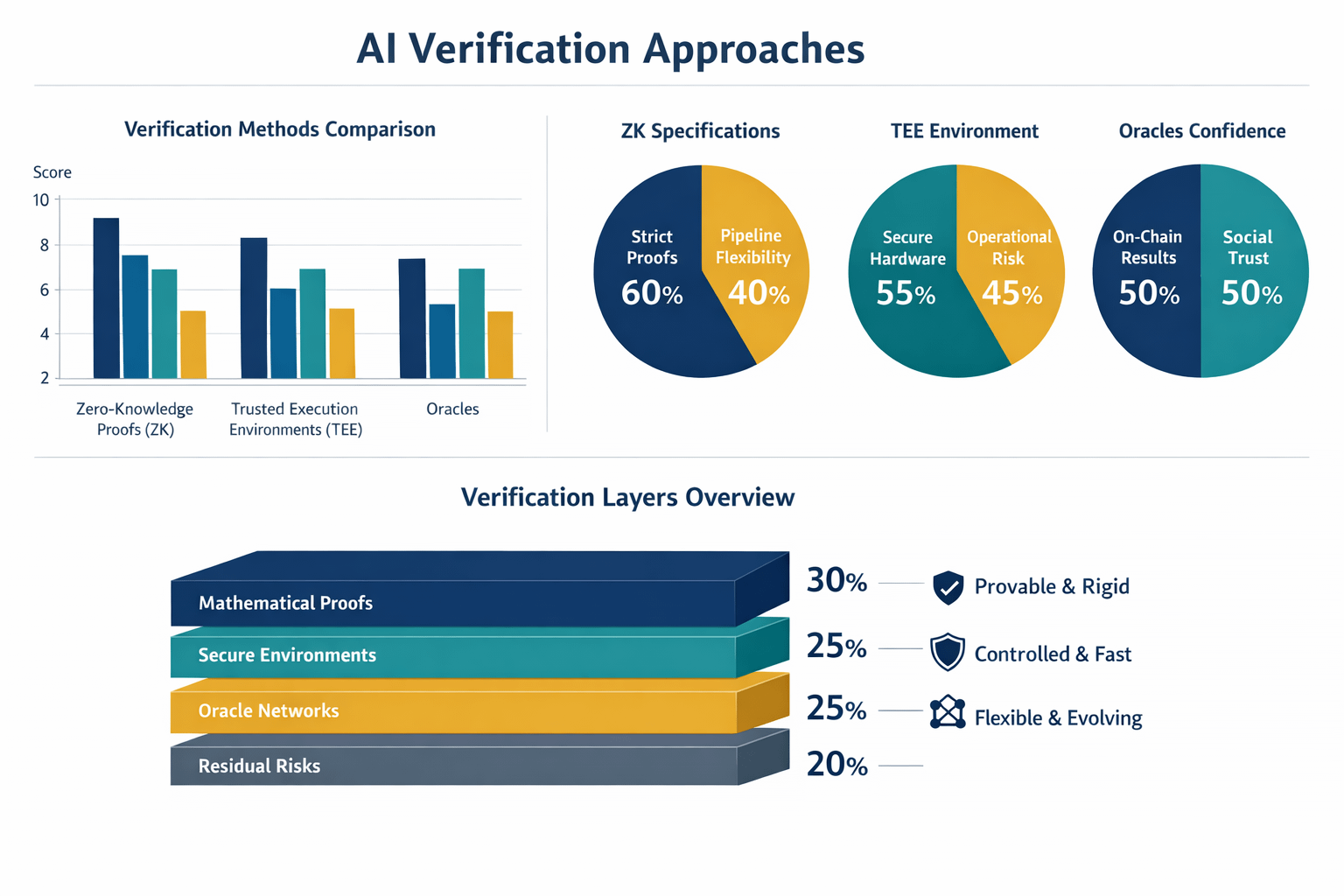

With ZK, the appeal is that you can push verification back into mathematics. You prove that a computation ran according to a specification, outsiders can verify it, and you don’t have to trust the operator. But the trap is in the word “specification.” Real-world AI inference isn’t just a forward pass. It pulls in tokenization, normalization, batching, weight selection, runtime configuration, even numerical handling. If any step outside the spec changes, the proof can still be “correct” for what you proved, while the product is “wrong” for what users thought they were getting. ZK is powerful when you’re disciplined enough to lock down everything that matters; without that discipline, it becomes a shield that hides blind spots.
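
To make the “specification” trap concrete, here is a minimal sketch of committing to the whole pipeline rather than only the weights. It is not a ZK proof; it only illustrates the kind of commitment a proof would need to be bound to. The component names and byte contents are hypothetical placeholders, and `pipeline_digest` and `changed_components` are names I made up for illustration.

```python
import hashlib
import json


def pipeline_digest(components: dict[str, bytes]) -> str:
    """Hash every pipeline component into one commitment.

    Each component is hashed on its own, then the sorted name/digest pairs
    are hashed together, so changing any single component changes the result.
    """
    per_component = {
        name: hashlib.sha256(blob).hexdigest()
        for name, blob in components.items()
    }
    canonical = json.dumps(per_component, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_components(declared: dict[str, bytes], running: dict[str, bytes]) -> list[str]:
    """Return the names whose bytes differ between the declared spec and what runs."""
    names = sorted(set(declared) | set(running))
    return [n for n in names if declared.get(n) != running.get(n)]


# Hypothetical pipeline: every name and value below is a placeholder.
declared = {
    "tokenizer": b"vocab-v1",
    "preprocessing": b"lowercase;strip",
    "weights": b"model-weights-v1",
    "runtime_config": b"dtype=fp16;batch=8",
}
# Same weights, but the runtime configuration was quietly changed.
running = dict(declared, runtime_config=b"dtype=int8;batch=8")

spec_matches = pipeline_digest(declared) == pipeline_digest(running)
drift = changed_components(declared, running)
print(spec_matches, drift)
```

In this sketch a weights-only hash would still match, which is exactly the blind spot: the check only catches the drift because the runtime configuration was inside the committed boundary.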

TEE goes the other way and says it plainly: don’t turn everything into a proof; make sure it runs inside an environment you can attest. For AI, TEE is often the sensible choice when you need throughput, latency, and a pipeline that stays close to its original shape. Honestly, I don’t dismiss TEE; I’m wary of the overconfidence it can create. TEE doesn’t eliminate trust; it relocates trust to hardware, firmware, the vendor, the operational stack, key management, and monitoring. When something goes wrong, you’re not only debugging the model, you’re debugging the organization.

Oracles look like the fastest path: a group runs the model, scores the output, posts the result on-chain, and incentives are designed to keep them honest. In many use cases, an oracle is enough, because you don’t always need “absolute truth”; sometimes you just need “enough confidence to act.” But AI makes oracles far more complicated than price feeds. “Correct” according to which benchmark, which dataset, which metric? And who gets to update the standard as models evolve? When the standard is fuzzy, a signature only says “someone claimed this,” not what level of assurance that claim deserves in a given context.
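
One way to picture the difference between “someone claimed this” and “enough confidence to act” is to bind every attestation to a named standard and only count votes made under that standard. The sketch below is an illustration under my own assumptions: `Attestation`, `quorum_verdict`, the signer names, and the `standard_id` strings are all hypothetical, and signature checking is deliberately left out.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Attestation:
    signer: str
    standard_id: str  # hypothetical label for benchmark + dataset + metric version
    verdict: str


def quorum_verdict(
    attestations: list[Attestation], standard_id: str, threshold: int
) -> Optional[str]:
    """Return the majority verdict under one standard, or None if no quorum.

    Only attestations made against `standard_id` count, and each signer
    gets one vote (their first). Signature verification is out of scope here.
    """
    votes: dict[str, str] = {}
    for a in attestations:
        if a.standard_id == standard_id:
            votes.setdefault(a.signer, a.verdict)
    if not votes:
        return None
    verdict, count = Counter(votes.values()).most_common(1)[0]
    return verdict if count >= threshold else None


# Three signers say "pass", but one of them scored against a different standard.
attestations = [
    Attestation("alice", "bench-v1", "pass"),
    Attestation("bob", "bench-v1", "pass"),
    Attestation("carol", "bench-v2", "pass"),
]

strict = quorum_verdict(attestations, "bench-v1", threshold=3)
relaxed = quorum_verdict(attestations, "bench-v1", threshold=2)
print(strict, relaxed)
```

Three signatures all say “pass,” yet only two of them mean the same thing. Whether that is enough depends on the threshold you chose, which is a statement about assurance, not about truth.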

Between those three paths, Mira Network caught my attention because it tries to change how the question is framed. Instead of declaring one technology will beat everything, Mira Network shifts the focus to describing and reconciling verification layers. In practice, that means Mira Network nudges teams to state clearly what parts they can prove, what parts they protect with an execution environment, what parts rely on social attestation, and what remains as residual risk. If you can’t name the residual risk, you can’t price it, and you certainly can’t explain it to users.
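
As a rough sketch of what “naming the residual risk” could look like in practice, the snippet below tags each pipeline component with the layer that covers it and lists whatever is left over. This is my own illustration, not Mira Network’s format or API: `Layer`, `Claim`, `residual_risks`, and the component names are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    PROVEN = "zk proof"
    ATTESTED = "tee attestation"
    SOCIAL = "oracle attestation"
    RESIDUAL = "residual risk"


@dataclass(frozen=True)
class Claim:
    component: str
    layer: Layer
    assumption: str  # what you still have to trust for this claim to hold


def residual_risks(claims: list[Claim], components: list[str]) -> list[str]:
    """Components explicitly marked residual, plus any with no claim at all."""
    covered = {c.component: c.layer for c in claims}
    return sorted(
        name
        for name in components
        if covered.get(name, Layer.RESIDUAL) is Layer.RESIDUAL
    )


# Hypothetical pipeline and claims, for illustration only.
components = ["forward_pass", "tokenizer", "runtime", "output_scoring", "training_data"]
claims = [
    Claim("forward_pass", Layer.PROVEN, "circuit matches the published spec"),
    Claim("runtime", Layer.ATTESTED, "hardware vendor and firmware are honest"),
    Claim("output_scoring", Layer.SOCIAL, "a quorum of scorers is not colluding"),
    Claim("training_data", Layer.RESIDUAL, "no poisoning check is performed"),
]

unaccounted = residual_risks(claims, components)
print(unaccounted)
```

The design choice worth noticing is that a component nobody mentioned (the tokenizer here) is treated the same as one openly declared residual. Silence counts as risk, not as coverage.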

The way I sanity-check systems like this is by looking for “bait-and-switch” points. ZK often gets baited and switched between computation and pipeline. TEE gets baited and switched between attestation and operations. Oracles get baited and switched between signatures and the standard of correctness. Mira Network, if executed well, reduces the room for bait and switch by making the verification target explicit, traceable, and comparable across implementations. That’s the sort of boring clarity I’ve learned to respect.

I think the future of AI verification won’t belong to a single camp. It will be layered: ZK for parts that can be tightly specified, TEE for performance-critical parts, oracles for parts tied to the outside world, and Mira Network as a way to keep the assumptions readable and the claims testable. It sounds less romantic, but the market has taught me that what survives is what can endure disputes.

And if you had to choose an AI verification design for a product with real money flowing through it, would you prioritize maximum rigor, maximum speed, or maximum transparency of assumptions, so users know exactly what they’re trusting?